Last updated on July 29th, 2022 at 08:13 pm

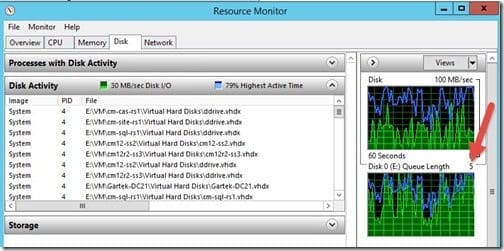

The other day, I was working on my SQL Server 2016 test server and everything was “slow.” Since this was a virtual machine (VM), and we all know that disk I/O (input/output) is the number one killer of VM performance, I quickly looked at Resource Monitor on the host. The host’s Disk Queue Length was over 50! Normally, it peaks at about 5 (red arrow), even when all of my test labs are up and running at the same time. In this post, I show you how I fixed the problem and solved the mystery of, “Why are my virtual machines slow?”

Having a disk queue length of 50 told me right away that one or more of my VMs was writing a lot of data to the disk!

Unfortunately, I’ve seen this problem before, so it didn’t take me long to track down which VM was the culprit.

Virtual Machines Slow

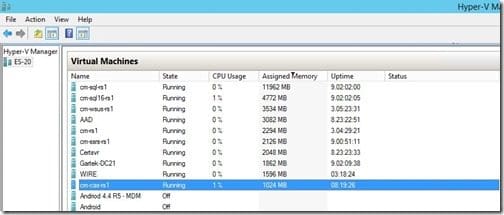

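All of my VMs are set to use Dynamic Memory. Because Hyper-V adjusts their memory up and down with demand, they rarely have an exact multiple of 512 MB assigned to them. So, I opened Hyper-V Manager and looked for any VMs that were still sitting at the default startup RAM size of 1024 MB. A VM that stays at exactly its startup value generally indicates a problem, because it suggests Dynamic Memory is not adjusting it.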

I found that the highlighted VM (cm-cas-rs1) was at fault.
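If you have a lot of VMs, the same check can be scripted instead of done by eye. Here is a minimal sketch of the rule in Python. It assumes you have already exported each VM's name, Dynamic Memory setting, and assigned memory from Hyper-V Manager; the `VmMemory` class, the `suspect_vms` function, and every VM name and reading other than cm-cas-rs1 are made up for illustration.

```python
from dataclasses import dataclass

STARTUP_MB = 1024  # default startup RAM size mentioned in this post


@dataclass
class VmMemory:
    name: str
    dynamic_memory: bool
    assigned_mb: int


def suspect_vms(vms, startup_mb=STARTUP_MB):
    """Return the names of Dynamic Memory VMs still sitting at the startup size."""
    return [
        vm.name
        for vm in vms
        if vm.dynamic_memory and vm.assigned_mb == startup_mb
    ]


# Hypothetical readings; only cm-cas-rs1 is a real name from this post.
vms = [
    VmMemory("cm-cas-rs1", True, 1024),
    VmMemory("lab-dc1", True, 1386),
    VmMemory("lab-sql1", True, 4218),
]

print(suspect_vms(vms))  # ['cm-cas-rs1']
```

A match is only a hint, not proof. A healthy VM can land on exactly 1024 MB by chance, so I still confirm the problem inside the VM before doing anything.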

On a side note, I love how cm-cas-rs1 was only using 1% of the CPU. If you looked only at the CPU usage, you would think that everything was great! Heck, all of my VMs were only using 2% of the CPU on the host server.

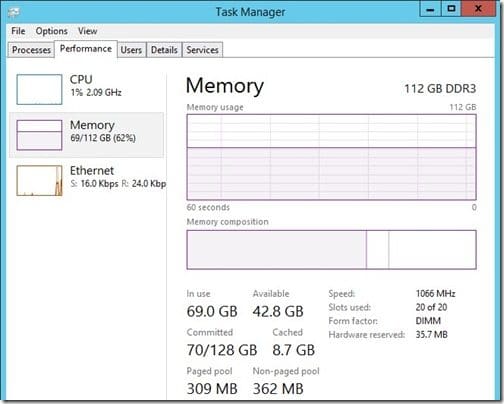

Also, if you looked only at the memory for the host server, you would see that it had tons of free RAM. Clearly, host memory was not the problem!

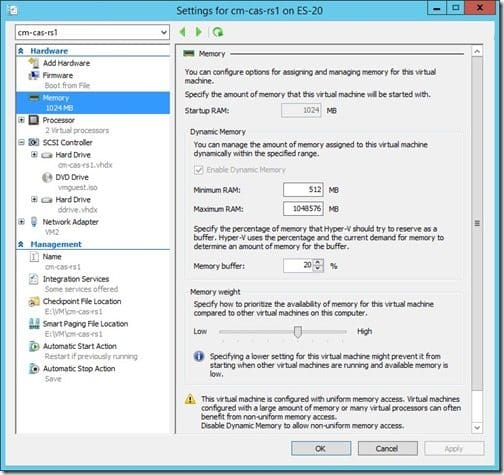

Next, I confirmed that the VM was set for Dynamic Memory.

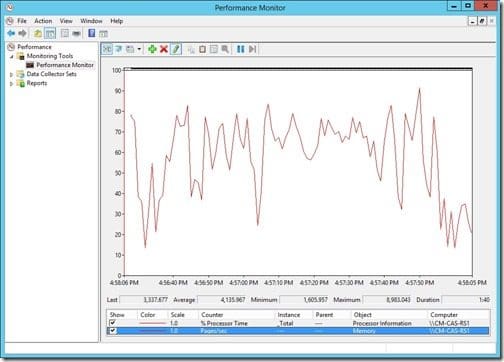

Now that I confirmed that Dynamic Memory was enabled, I logged onto the VM and opened Performance Monitor.

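I wanted to take a look at the average pages/second, so I added the Memory\Pages/sec performance counter. Notice how the average Pages/sec is over 4000! This is a HUGE number! The average should be less than 50. In other words, the VM was starved for memory and was paging to disk constantly, which is most likely what drove the host’s disk queue so high.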

By the way, if Operations Manager is monitoring the server, an alert is triggered when this counter stays over 50 pages/second for more than 5 minutes.
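To make the “over 50 for more than 5 minutes” idea concrete, here is a rough Python sketch that applies the same rule to a list of Pages/sec samples, such as values copied out of a Performance Monitor log. This is not how Operations Manager implements its monitor. The `sustained_breach` function, the 15-second sample interval, and the sample values are all assumptions for illustration.

```python
from statistics import mean


def sustained_breach(samples, threshold=50.0, window_seconds=300, interval_seconds=15):
    """Return True if consecutive samples stay above threshold for a full window.

    Assumes the samples were collected at a fixed interval (15 seconds here).
    """
    needed = max(1, window_seconds // interval_seconds)
    run = 0
    for value in samples:
        # Count consecutive samples over the threshold; any dip resets the run.
        run = run + 1 if value > threshold else 0
        if run >= needed:
            return True
    return False


# Hypothetical samples: 5 minutes of heavy paging vs. paging with a dip.
steady = [4000.0] * 20
spiky = [4000.0] * 19 + [10.0] + [4000.0] * 5

print(mean(steady))             # 4000.0
print(sustained_breach(steady)) # True
print(sustained_breach(spiky))  # False
```

Requiring an unbroken run is a deliberate choice. A short burst of paging is normal, so only a counter that stays high for the whole window is worth chasing.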

How do you fix this problem?

You have two options:

1. The easy fix is to reboot the VM. Yes, it is that simple. But I also found that it can take two or three reboots before things get back to normal.

2. The harder fix is to re-apply the Hyper-V Integration Services on the VM.

Once I rebooted the server, everything went back to normal and my other VMs were no longer “slow.” I hope you find these tips useful. If you have any questions about this post, “Why Are My Virtual Machines Slow,” please leave a note in the comment section below or contact me @GarthMJ.